I’m spending much of this October as a resident fellow at the Bellagio Centre in Italy, taking part in a thematic month on Artificial Intelligence (AI). Besides working on some writings about the relationship between open standards for data and the evolving AI field, I’m trying to read around the subject more widely, and learn as much as I can from my fellow residents.

As the first of a likely series of ‘thinking aloud’ blog posts to try to capture reflections from reading and conversations, I’ve been exploring what Wittgenstein’s later language philosophy might add to conversations around AI.

Wittgenstein and technology

Wittgenstein’s philosophy of language, whilst hard to summarise in brief, might be conveyed through reference to a few of his key aphorisms. §43 of the Philosophical Investigations makes the key claim that: “For a large class of cases–though not for all–in which we employ the word ‘meaning’ it can be defined thus: the meaning of a word is its use in the language.” But this does not lead to the idea that words can mean anything: rather, correct use of a word depends on its use being effective, and that in turn depends on a setting, or, as Wittgenstein terms it, a ‘language game’. In a language game participants have come to understand the rules, even if the rules are not clearly stated or entirely legible: we engage successfully in language games through learning the techniques of participation, acquired through a mix of instruction and of practice. Our participation in these language games is linked to the idea of ‘forms of life’, or, as it is put in §241 of the Philosophical Investigations, “It is what human beings say that is false and true; and they agree in the language they use. That is not agreement in opinions but in form of life.”

As I understand it, one of the key ideas here can be expressed by stating that meaning is essentially social, and it is our behaviours and ways of acting, constrained by wider social and physical limits, that determine the ways in which meaning is made and remade.

Where does AI fit into this? Well, in Wittgenstein as a Philosopher of Technology: Tool Use, Forms of Life, Technique, and a Transcendental Argument, Coeckelbergh & Funk (2018) draw on Wittgenstein’s tool metaphors (and his professional history as an engineer as well as a philosopher) to show that we can apply a Wittgensteinian analysis to technologies, explaining that “we can only understand technologies in and from their use, that is, in technological practice which is also culture-in-practice.” (p 178). At the same time, they point to the role of technologies in constructing the physical and material constraints upon plausible forms of life:

Understanding technology, then, means understanding a form of life, and this includes technique and the use of all kinds of tools—linguistic, material, and others. Then the main question for a Wittgensteinian philosophy of technology applied to technology development and innovation is: what will the future forms of life, including new technological developments, look like, and how might this form of life be related to historical and contemporary forms of live? [sic] (p 179)

It is important, though, to be attentive to the different properties of different kinds of tools (linguistic, material, technological) in use within any form of life. Mass digital technologies, in particular, appear to spread in less negotiable ways: that is, a newly introduced technology, whilst open to being embedded in forms of life in subtly different ways, often has core features presented only on a take-it-or-leave-it basis, and, once introduced, can be relatively brittle and resistant to shaping by its users.

So – as new technologies are introduced, we may find that they reconfigure the social and material bounds of our current forms of life, whilst also introducing new language games, or new rules for existing games, into our social settings. And with contemporary AI technologies in particular, a number of specific concerns may arise.

AI Concerns and Critical Responses

Before we consider how AI might affect our forms of life, a few further observations (and statements of value):

- The plural of ‘forms’ is intentional. There are variations in the forms of life lived across our planet. Social agreements in behaviour and action vary between cultural settings, regions or social strata. Many humans live between multiple forms of life, translating in word and behaviour between the different meanings each requires. Multiple forms are not strictly dichotomous: different forms of life may have many resemblances, but their distinctions matter and should be valued (this is an explicit political statement of value on my part).

- There have been a number of social projects over past centuries to establish certain universal forms of life. The development of consensus on human rights frameworks, seeking equitable treatment of all, is one example (I also personally subscribe to the view that a high level of respect for universal human rights should feature as a constraint on all forms of life).

- Within this trend, there are also a number of significant projects seeking to establish greater acceptance of different ways of living, including action to reverse the Victorian imposition of certain normative family structures, work to afford individuals greater autonomy in defining their own identities, and activity to embed much more ecological models of thinking about human society.

These trends (or ongoing social struggles if you like) seeking to make our ways of living more tolerant, open, inclusive and sustainable are important to note when we consider the rise of AI systems. Such systems are frequently reliant on categorised data, and on a reductive modelling of the human experience based on past, rather than prospective, data.

This noted, it appears then that we might point to two distinct forms of concern about AI:

(A) The use of algorithmic systems, built on reductive data, risks ossifying past ways of life (with their many injustices), rather than supporting struggles for social justice that involve ongoing efforts to renegotiate the meaning of certain categories and behaviours.

(B) Algorithmic systems may embody particular ways of life that, because of the power that can be exercised through their pervasive operation, cause those forms of life to be imposed over others. This creates pressure for humans to adapt their ways of life to fit the machine (and its creators/owners), rather than allowing the adaptation of the machine to fit into different human ways of life.

Brief examples

Gender detection software is AI trained to judge the gender of a person from an image (or from analysing names, text or some other input). In general, such systems define gender using a male-female binary, and they are being widely used in research and industry. Yet, at the same time as the task of judging gender is being passed from human to machine, ways of life that reject the equation of gender identity with sex, and the idea of a fixed gender binary, are becoming increasingly prominent. The introduction of AI here risks the ossification of past social forms.

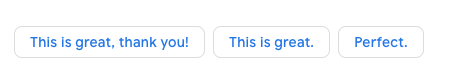

Predictive text tools are increasingly being embedded in e-mail and chat clients to suggest one-click automatic responses, instead of requiring the human to craft a written response. Such AI-driven features are at once a tool of great convenience and an imposed shift in our patterns of social interaction.

Such forms of ‘social robot’ are addressed by Coeckelbergh & Funk when they write: “These social robots become active systems for verbal communication and therefore influence human linguistic habits more than non-talking tools.” (p 185). But note the material limitations of these robots: they can’t construct a full sentence representative of their user. Instead, they push conversation towards the quick short response, creating a pressure to change patterns of human interaction.

The examples above, suggested by Gmail for me to use in reply to a recent e-mail, might follow terms I’d often use, but they push towards a form of e-mail communication that, at least in my experience, represents a particularly capitalist and functional form of life, in which speed of communication is of the essence, rather than social communication and the exploration of ideas.

Reflections and responses

Wittgenstein was not a social commentator, but it is possible to draw upon his ideas to move beyond conversations about AI bias, to look at how the widespread introduction of algorithmic and machine-learning driven systems may interact with different contemporary forms of living.

I’m always interested, though, in the critical leading to the practical, and so below I’ve started to sketch out possible responses that the analysis above leads me to consider. I also strongly suspect that these responses, and the justification for them, could be elaborated much more directly and accessibly without getting here via Wittgenstein. Writing that may be a task for later, but as I came here via the Wittgensteinian route, I’ll stick with it.

(1) Find better categories

If we want future algorithmic systems to represent the forms of life we want to live, not just those lived in the past or imposed upon populations, we need to focus on the categories and data structures used to describe the world and train machine-learning systems.

The question of when we can develop global categories that have meaning that is ‘good enough’ in terms of alignment in use across different settings, and when it is important to have systems that can accommodate more localised categorisations, is one that requires detailed work, and that is inherently political.

(2) Build a better machine

Some objections to particular instances of AI may arise because the technology is, ultimately, too blunt in its current form. Would my objection to predictive text tools be the same if they could express more complete sentences, more in line with the way I want to communicate? For many critiques of algorithmic systems, there may be a plausible response that a better designed or trained system could address the problem raised.

I’m sceptical, however, of whether most current instantiations of machine learning can plausibly be adaptable enough to different forms of life. This is not least because, for some ways of living, the sample size may be too small to gather enough data points to construct a good model. In other cases, collecting the required data may be too expensive or intrusive for the theoretical possibility of highly adaptive machine-learning systems to be practically feasible or desirable.

(3) Strategic rejection

Recognising the economic and political power embedded in certain AI implementations, and the particular form of life it embodies, may help us to see technologies we want to reject outright. If a certain tool makes moves in a language game that are at odds with the game we want to be playing, and only gains agreement of action through its imposition, then perhaps we should not admit it at all.

To put that more bluntly (and bringing in my own political stance), certain AI tools embody a late-capitalist form of life, rooted in the cultures and practices of a small stratum of Silicon Valley. Such tools should have no place in shaping other ways of life, and should be rejected not because they are biased, or because they have not adequately considered issues of privacy, but simply because the form of life they replicate undermines both equality and ecology.

Where next

Over my time here at Bellagio, I’ll be particularly focussed on the first of these responses – seeking better categories, and understanding how processes of standardisation interact with AI. My goal is to do that with more narrative, and less abstraction, but we shall see…

Hi Tim, very much enjoyed your piece. I read Max Tegmark’s ‘Life 3.0’ book on AI last year, which I recommend. He’s an advocate for beneficial AI. Without rereading or skimming I can’t summarise it well, but some of my lasting takeaways were that Tegmark thinks some kind of conscious general AI is inevitable (not if but when), and that it is therefore better to explore and develop the necessary ethical and legal controls sooner rather than later. And yes, as you have detected, I think Tegmark’s analysis demonstrates an only partially examined bias towards evaluations based on late forms of capitalism… charismatic billionaire oligarchs leading as opposed to governments, default Silicon Valley tech-think language, neoliberalism and techno-utopian versions of the future. Chris Jockel

I haven’t read Wittgenstein either, but I wonder why the industry is adopting his philosophy (a 1920s mindset?) to justify its work. I think the word ‘Life’ shouldn’t apply at all. This is ‘mechanisation’ and ‘tools’. As a Human Ecologist, I feel that we in our culture are already machine-dependent, ‘denatured humans’, and need to reverse or halt this process of thinking. The AI industry’s attempt to justify including its work in ‘life’ and ‘culture’ is a false dream, and I agree with you: it further diminishes real ecological life.

E. M. Forster wrote a nice little piece of fiction – ‘The Machine Stops’. I think it is definitely worth your arguing about consequences, and I hope you can find some references for that. Did Wittgenstein do consequential thinking?

The Dutch are starting to grow tomatoes using AI. That might show that the lack of a human touch or natural air has some deleterious impact on growth for all we know – I don’t suppose that will be a research question, though. We know from agriculture that intensification doesn’t work. Same applies to the AI industry, no?

Have fun. Liz